Containers vs. VMs: What are the key differences?

Containers are a valuable option for deploying applications, but they have limitations and operate differently than VMs.

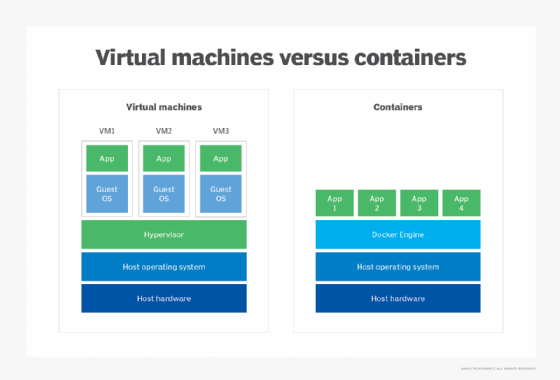

The difference between containers and VMs lies primarily in where the virtualization layer sits and how OS resources are used. Containers and VMs are simply different ways of provisioning and using compute resources -- processors, memory and I/O -- that are already present in a physical computer. Although virtualization and containers share the same goal, their approaches differ notably, and each offers unique characteristics and tradeoffs for enterprise workloads.

What are VMs?

Virtual machines rely on a hypervisor, which is a software layer installed atop the bare-metal system hardware. Such hypervisors are called Type 1 or bare-metal hypervisors. Type 1 hypervisors, such as VMware vSphere ESXi and Microsoft Hyper-V, are perceived as OSes in their own right. Once administrators install the hypervisor layer, they can provision VM instances from the system's available computing resources. Each VM can then receive its own unique OS and workload. Thus, VMs are fully isolated from one another -- no VM is aware of or relies on the presence of another VM on the same system -- and malware, application crashes and other problems affect only that VM. Admins can migrate VMs from one virtualized system to another without regard for the system's hardware or OSes.

A system can be provisioned with many VMs. Often, the first VM is the host VM, used for system management workloads, such as Microsoft System Center. Subsequent VMs contain other enterprise workloads, such as database, ERP, CRM, email server, media server, web server or other business applications. VMs share several common characteristics:

- Isolation. Every VM is logically isolated from every other VM. VMs aren't aware of each other's presence and don't share software components.

- Compatibility. VMs are fully compatible with all standard x86 OSes, applications and other software components, so VMs can run any software that runs on a physical x86 computer.

- Portability. VMs can be migrated from one virtualized computer to another virtualized computer.

Benefits of VMs

VMs have become a de facto standard for enterprise virtualization over the last 20 years, and they bring numerous advantages to the business, including the following:

- Independence. The isolation of every VM means faults and failures in the VM's OS or application don't affect any other VM on the same computer or other computers in the data center environment.

- Resource utilization. Because multiple VMs can be provisioned and deployed on the same physical computer, a system can effectively host multiple workloads without having to buy multiple computers. This enables server consolidation in the data center, leading to savings in hardware costs, power, cooling and space.

- Availability. The portability of VMs enables VM work to be balanced across multiple systems for better performance and supports system maintenance tasks. VMs can also be copied to or restored from files, enabling fast VM protection and recovery.

- Flexibility. Every VM requires its own OS, but each OS can be different. This enables a business to run multiple OSes at the same time on one physical computer, which a bare-metal system running a single OS can't do.

- Security. Hypervisors and the logical isolation they provide have proven secure for VMs. Although one VM can be compromised, it doesn't expose other VMs.

VM drawbacks

Despite the significant benefits, VMs also carry several drawbacks:

- Size. VM instances can be large, involving multiple processors and significant amounts of memory. This works well for enterprise-class workloads, but there is a practical limit to the number of VMs that can be deployed on any one computer.

- Time. VMs can take from several seconds to several minutes to provision and deploy. Although this isn't much time in human terms, VMs might not scale quickly enough to meet dynamic or short-term computing needs where resource elasticity is important.

- Software licensing. Every VM needs an OS and a workload, so the cost of OS and application licensing can become significant. A business must manage VM deployment carefully to control licenses and ensure VMs with costly OS and application licenses are running and doing productive work for the business, avoiding VM sprawl.

What are containers?

Containers are logical instances provisioned from system hardware resources that have been virtualized by container engine software. Each container can then be loaded with a software application and its associated dependencies and can redeploy easily between physical systems where a suitable container engine is present.

The virtualized container environment is arranged differently from a VM environment. With containers, a host OS, such as Linux, is installed on the system first, and then a container layer -- typically, a container manager, such as Docker -- is installed atop the host OS. The container manager plays a role for containers similar to the one a hypervisor plays for VMs. The arrangement resembles a hosted, or Type 2, hypervisor, which also runs atop a host OS.

Once admins install the container layer, they can provision container instances from the system's available computing resources and deploy enterprise application components in the containers. However, every containerized application shares the same underlying OS: the single host OS. By comparison, every VM gets its own unique OS. Although the container layer provides a level of logical isolation between containers, the common OS can present a single point of failure for all containers on the system. As with VMs, containers are also easily migrated between physical systems with a suitable OS and container layer environment.
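
The effect of sharing one OS instead of giving every instance its own can be illustrated with simple arithmetic. The following is a minimal sketch, not a sizing tool: the function name `max_instances` and every memory figure are assumptions chosen only to make the comparison concrete.

```python
def max_instances(host_memory_gb, app_memory_gb, per_instance_os_gb, shared_os_gb):
    """Return how many instances fit in host memory.

    per_instance_os_gb: OS overhead paid by every instance (a VM's guest OS).
    shared_os_gb: overhead paid once per host (hypervisor or container host OS).
    """
    usable = host_memory_gb - shared_os_gb
    per_instance = app_memory_gb + per_instance_os_gb
    return max(0, int(usable // per_instance))


# Illustrative numbers only (assumptions, not measurements).
HOST_GB = 64
APP_GB = 0.5

# Each VM carries its own guest OS on top of the application.
vm_count = max_instances(HOST_GB, APP_GB, per_instance_os_gb=1.5, shared_os_gb=2)

# Containers pay the OS cost once, through the shared host OS.
container_count = max_instances(HOST_GB, APP_GB, per_instance_os_gb=0, shared_os_gb=2)

print(vm_count, container_count)
```

With these assumed figures, the same host fits several times more containers than VMs, because the OS overhead is paid once instead of once per instance.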

Benefits of containers

Containers offer their own unique features and characteristics:

- Size. Containers share a single common OS kernel and don't employ unique OSes of their own, so containers are much smaller logical entities than VMs. This enables a computer to host far more containers simultaneously than VMs.

- Speed. The small size of container instances enables them to be created and destroyed far faster than VMs. This makes containers well suited to fast scalability and short-term use cases that might be impractical for VMs.

- Unique hypervisor. Containers rely on specialized platforms for hosting and management, such as Docker, rkt and Apache Mesos, which fill the role that a hypervisor fills for VMs.

- Immutability. Unlike VMs, containers don't move. Instead, the container orchestrator in the container software layer starts and stops containers when they are needed. Similarly, the software that runs in containers isn't updated or patched like traditional software. Rather, the updates are incorporated into a new container image, which can be redeployed where needed.
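
The immutability described in the list above can be sketched in a few lines. This is a toy model under stated assumptions, not the API of Docker or any real orchestrator: the `Image`, `Container` and `Orchestrator` classes and the `roll_out` method are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Image:
    """A versioned, read-only package of an application and its dependencies."""
    name: str
    tag: str


@dataclass(frozen=True)
class Container:
    """A running instance; frozen, so its image can't be changed in place."""
    container_id: int
    image: Image


class Orchestrator:
    """Hypothetical orchestrator that only starts and stops containers."""

    def __init__(self):
        self._next_id = 1
        self.running = []

    def start(self, image):
        container = Container(self._next_id, image)
        self._next_id += 1
        self.running.append(container)
        return container

    def stop(self, container):
        self.running.remove(container)

    def roll_out(self, new_image):
        """Replace containers of the same image name instead of patching them."""
        for old in list(self.running):
            if old.image.name == new_image.name and old.image != new_image:
                self.start(new_image)  # deploy the updated image first
                self.stop(old)         # then retire the outdated container


orch = Orchestrator()
orch.start(Image("web", "1.0"))
orch.start(Image("web", "1.0"))
orch.roll_out(Image("web", "1.1"))

tags = [c.image.tag for c in orch.running]
ids = [c.container_id for c in orch.running]
print(tags, ids)
```

After the rollout, the running containers are new instances with new IDs. Nothing was patched in place.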

Container drawbacks

Containers have enabled enormous scalability and flexibility for enterprise organizations, but there are several disadvantages:

- Performance. There can be a lot of containers, and containers share a common OS in addition to the container layer. This means containers use resources efficiently, but there can be some contention between containers attempting to access hardware resources, such as storage or networks, through the common OS. Such contention can affect overall container performance.

- Compatibility. Containers packaged for one platform, such as Docker, might not work with other platforms. Similarly, some container tools might not work with multiple container platforms. For example, Red Hat OpenShift only works with the Kubernetes orchestrator. Consider the container ecosystem when evaluating container technologies for the enterprise.

- Storage. Containers are natively designed to be stateless -- the data in a container disappears when the container does. There are ways to provide persistent storage for containers, such as Docker data volumes, but persistent container storage isn't a native part of container technology and is often treated as a separate issue.

- Suitability. Containers are typically small and nimble entities best suited for application components or services such as microservices. Full-featured enterprise applications might not work well in containers. Consider workload suitability when planning container deployments.
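
The storage drawback in the list above is easier to see in a small model. The sketch below is an assumption-laden illustration, not Docker's volume API: `EphemeralContainer` is a hypothetical class, and a plain dictionary stands in for an external volume.

```python
class EphemeralContainer:
    """Toy container whose own writable data disappears with it."""

    def __init__(self, name, volume=None):
        self.name = name
        self.scratch = {}     # writable layer: discarded with the container
        self.volume = volume  # optional dict standing in for a persistent volume

    def write(self, key, value, persistent=False):
        if persistent:
            if self.volume is None:
                raise RuntimeError("no volume attached")
            self.volume[key] = value
        else:
            self.scratch[key] = value

    def read(self, key):
        if self.volume is not None and key in self.volume:
            return self.volume[key]
        return self.scratch.get(key)


volume = {}  # lives outside any single container

first = EphemeralContainer("db-1", volume)
first.write("cache", "warm")                # kept only in the container
first.write("orders", 42, persistent=True)  # kept in the volume
del first                                   # the container is destroyed

second = EphemeralContainer("db-2", volume)
lost = second.read("cache")
kept = second.read("orders")
print(lost, kept)
```

The replacement container sees only what was written to the volume. Everything in the first container's scratch space is gone.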

Compare containers and VMs

Containers and VMs each possess unique characteristics and tradeoffs, but there are many dimensions to consider when selecting a virtualization technology. The following list summarizes some of the most common comparisons:

- Deployment. Both containers and VMs can be deployed by loading a desired image file into a corresponding virtual instance. An image file packages all the components needed to start a VM or container. VMs can also be deployed manually, as they act as independent computers.

- Fault tolerance. Both containers and VMs support fault-tolerant techniques, such as load-balanced clustering, and both can be readily recovered or restarted from their respective image files. Containers are natively stateless and usually carry little risk of data loss when a fault occurs. VMs are naturally persistent and can potentially suffer some amount of data loss when a fault occurs.

- Isolation. VMs have complete logical isolation. VMs aren't aware of any other VM in the environment -- even though other VMs might be running on the same physical computer. Containers have less isolation because containers share a common OS kernel.

- Load balancing. VMs enable live migration and can be migrated from one virtualized computer to another to balance computing and networking loads. Containers don't move but are recreated on target computers before destroying the original instance. Thus, containers readily support immutable infrastructure concepts.

- Networking. Both containers and VMs can communicate across instances, either on the same computer or between computers connected by an IP network. The high volume of containers possible in a data center environment might strain LAN bandwidth quicker than fewer VMs.

- Performance. Both containers and VMs provide excellent performance at close to bare-metal speeds. VMs have a slight performance edge because VMs don't face the potential contention of a shared OS.

- Persistent storage. VMs include persistent storage with a virtual hard disk. Containers natively work from memory and require careful storage tools, such as Docker data volumes, to preserve container data once the container is destroyed.

- Portability and compatibility. VMs share common virtualization technologies, often based on or built from open source components. VMs can be portable and compatible with systems employing similar virtualization platforms. Containers are highly portable, and the component packaging incorporated into container image files can enable containers to run on almost any system that supports the container platform. However, container platforms aren't completely compatible.

- Resources. Containers use fewer computing resources and typically host smaller applications than VMs, so an enterprise can potentially fit far more containers on a single computer.

- Scalability. Containers are smaller and require fewer resources than VMs, so containers can scale -- be created or destroyed -- much faster than VMs. Containers are often employed where short-term compute instances are required.

- Security. VMs use separate OSes and applications, so a security flaw in one VM doesn't translate to other VMs in the environment. Containers share a common OS and can be affected by flaws in the OS, so they aren't as secure as VMs. However, containers can be operated within a VM in a hybrid deployment approach, which can help contain potential security vulnerabilities.

- Updates and upgrades. VMs act as independent, fully functional computers. VM contents, such as OSes, drivers and applications, can be patched, updated and upgraded through traditional techniques. Containers use a fixed package of libraries and binaries that are created to run on container platforms, such as Docker. Containers can't be readily upgraded or updated live. Instead, container files are updated and versioned, and the new image file can be redeployed to implement the updates. This also supports immutable infrastructure concepts.
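
The load balancing difference in the list above, where VMs are migrated and containers are recreated, can be sketched as follows. This is a simplified model under assumptions: `Host`, `migrate_vm` and `relocate_container` are hypothetical names, and instances are plain dictionaries rather than real VMs or containers.

```python
class Host:
    """A physical computer holding a list of running instances."""

    def __init__(self, name):
        self.name = name
        self.instances = []


def migrate_vm(vm, source, target):
    """Move the same VM, in-memory state included, to the target host."""
    source.instances.remove(vm)
    target.instances.append(vm)
    return vm


def relocate_container(container, source, target):
    """Create a fresh container from the same image, then destroy the original."""
    replacement = {"image": container["image"], "state": {}}
    target.instances.append(replacement)  # the new instance comes up first
    source.instances.remove(container)    # the original is destroyed afterward
    return replacement


host_a, host_b = Host("a"), Host("b")
vm = {"image": "erp-vm", "state": {"sessions": 3}}
ctr = {"image": "web:1.0", "state": {"requests": 10}}
host_a.instances += [vm, ctr]

moved_vm = migrate_vm(vm, host_a, host_b)
new_ctr = relocate_container(ctr, host_a, host_b)

print(moved_vm is vm, moved_vm["state"], new_ctr["state"])
```

The migrated VM is the same instance with its state intact, while the container on the target host is a new instance that starts from the image with empty state.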

Containers vs. VMs: Security issues

VMs are regarded as the most secure and resilient platform for workloads. Hypervisor technologies are well proven, and the logical isolation that hypervisors provide between VMs ensures every VM exists as its own separate logical server with its own OS and drivers. However, all the elements running in and around the VM -- the OS, application, drivers, authorization and authentication, and network traffic -- are subject to security flaws that must be constantly addressed, just as they would be in any traditional physical deployment. When the highest level of isolation is required for security, VMs have the edge.

Containers are nimble and fast, but all containers run atop a common OS. This is technically fine and well proven, but any bugs or security flaws in the OS can potentially expose all the containers running through the common OS kernel. The underlying OS kernel poses a single point of vulnerability. At a minimum, systems used for containers typically employ a hardened OS. Admins apply OS security updates and patches only after extensive testing and validation. Security tactics, such as intrusion detection and prevention, are also typically implemented to guard the server. Security can be augmented by running groups of containers in VMs, mixing the benefits of containers with the enhanced isolation of VMs.
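
The benefit of grouping containers into VMs can be shown as a simple exposure calculation. The sketch below is an illustration under assumptions, not a security tool: the function `exposed_by_kernel_flaw` and the workload names are hypothetical, and it models only which containers share a kernel.

```python
def exposed_by_kernel_flaw(placement, compromised):
    """Return the containers that share a kernel with the compromised one.

    placement maps each kernel (a host OS, or a guest OS inside a VM)
    to the list of containers running on it.
    """
    for containers in placement.values():
        if compromised in containers:
            return sorted(containers)
    return []


# All containers directly on one host OS.
flat = {"host-os": ["web", "api", "db", "batch"]}

# The same containers split across two VMs, each with its own guest OS.
grouped = {
    "vm1-guest-os": ["web", "api"],
    "vm2-guest-os": ["db", "batch"],
}

flat_exposure = exposed_by_kernel_flaw(flat, "web")
grouped_exposure = exposed_by_kernel_flaw(grouped, "web")
print(flat_exposure, grouped_exposure)
```

In the flat layout, a kernel flaw reached through one container exposes all four. In the grouped layout, the exposure stops at the VM boundary.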

Containers, VMs or both: Choosing the best option

Instance choice is driven by architectural goals. Traditional monolithic applications -- still quite common and appropriate for many enterprise applications -- work well for VMs and common high availability techniques, such as clustering. Such traditional applications are often intended to run for long durations and use the strong security that VM isolation can provide.

By comparison, application components and services can function quite well in containers where characteristics such as fast startup, elasticity and high scalability -- and, potentially, short operational times -- can work to the containers' advantage. Container adoption is also increasingly driven by emerging software design models, such as microservices architectures. Microservices enable an application's design to be broken up, developed, deployed and supported as separate functional components, each in a fast, highly scalable container that can also be independently scaled and redeployed. Thus, containers have become attractive for flexible and scalable application designs.

The choice of containers vs. VMs isn't a mutually exclusive one. Containers and VMs can readily coexist in the same data center environment -- and even on the same server -- so the two technologies are considered complementary, expanding the available tool set of application architects and data center administrators to provide unique advantages for the most appropriate workloads. The trick, then, is matching the virtual instance to the right workload.

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 20 years of technical writing experience in the PC and technology industry.