Full virtualization vs. paravirtualization: Key differences

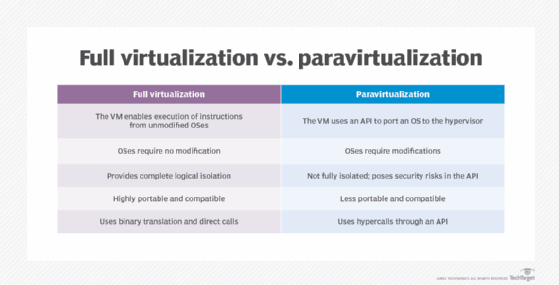

Full virtualization and paravirtualization both enable hardware resource abstraction, but the two technologies differ when it comes to isolation levels.

The idea behind all virtualization is to abstract a computer's hardware resources from the software that uses those resources. A hypervisor is a software tool installed on the host system to provide this layer of abstraction. Once a hypervisor is installed, OSes and applications interact with the virtualized resources abstracted by the hypervisor -- not the physical resources of the actual host computer.

Virtualization approaches differ in the level of isolation they provide. The two compared here are full virtualization and paravirtualization.

What is full virtualization?

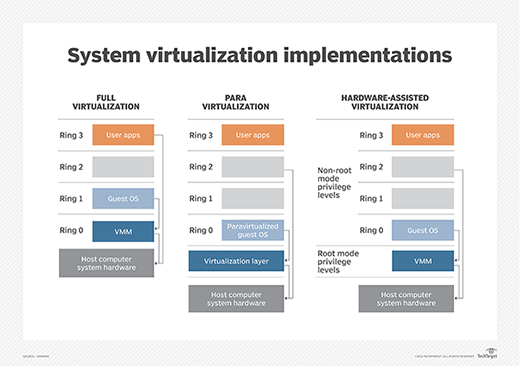

Virtualization is often approached as full virtualization. That is, the hypervisor provides complete abstraction through a software-based virtualization layer and assigns abstracted resources to one or more logical entities called virtual machines (VMs). In enterprise data centers, full virtualization is most commonly delivered by Type 1, or bare-metal, hypervisors, such as VMware ESXi. Each VM and its guest OS work as though they run alone on independent computers, and the OSes and applications require no special modifications or adaptations to operate in a typical VM. Every VM is logically isolated from every other VM. VMs don't communicate or share resources unless they are deliberately set up to do so -- usually through standard network intercommunications.
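
To make the idea of assigning abstracted resources to VMs more concrete, here is a minimal Python sketch of a hypervisor carving a host's CPUs and memory into VMs. The `Host` and `VM` classes and the `create_vm` method are hypothetical names for illustration, not any product's API. The rule that memory is never overcommitted is also an assumption of this sketch; real hypervisors use far more sophisticated scheduling and memory management.

```python
from dataclasses import dataclass, field


@dataclass
class VM:
    """A logical machine that sees only the resources assigned to it."""
    name: str
    vcpus: int
    memory_mb: int


@dataclass
class Host:
    """Physical resources that a toy hypervisor carves up into VMs."""
    cpus: int  # informational only; this sketch doesn't limit vCPUs
    memory_mb: int
    vms: list = field(default_factory=list)

    def free_memory_mb(self) -> int:
        # Memory not yet assigned to any VM.
        return self.memory_mb - sum(vm.memory_mb for vm in self.vms)

    def create_vm(self, name: str, vcpus: int, memory_mb: int) -> VM:
        # Assumption for this sketch: memory is never overcommitted, while
        # vCPUs may exceed physical CPUs because they are time-sliced.
        if memory_mb > self.free_memory_mb():
            raise ValueError(f"not enough free memory for {name}")
        vm = VM(name, vcpus, memory_mb)
        self.vms.append(vm)
        return vm


host = Host(cpus=16, memory_mb=65536)
host.create_vm("web", vcpus=4, memory_mb=16384)
host.create_vm("db", vcpus=8, memory_mb=32768)
print(host.free_memory_mb())
```

Each `VM` object records only its own allocation. This loosely mirrors how a guest sees only the resources the hypervisor assigns to it.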

However, early hypervisors had a performance problem. Without help from the processor, the hypervisor's virtual machine monitor (VMM) had to intercept privileged guest instructions and handle them in software, using techniques such as binary translation. It also had to translate between physical hardware and virtual resources, such as CPUs and memory spaces. This constant translation imposed a performance penalty on the host computer. In the early days of full virtualization, this performance penalty limited the practical number of VMs that a system could host and frequently limited the types of applications that could run in a VM successfully.

These early performance problems have long since been resolved by hardware-assisted processors, which add virtualization extensions to the processor's instruction set, such as Intel Virtualization Technology (VT) and AMD Virtualization (AMD-V). Today, full virtualization runs at near-native speeds and offers excellent performance for server virtualization in enterprise production environments. As a result, paravirtualization has fallen into disuse, though it's still important to understand what paravirtualization is and how it fits into the available spectrum of virtualization technologies.

Benefits of full virtualization

Full virtualization doesn't require any cooperation from the guest OS to virtualize a computer or create VMs, so IT administrators can run OSes and applications without modification. The hypervisor fully manages the underlying resources and handles privileged instructions on the guest's behalf. It can also present emulated hardware to the guest OS, which decouples the guest from the physical hardware and can improve reliability, security and productivity.

Full virtualization enables admins to run applications on completely isolated guest OSes, which provides support for multiple OSes simultaneously -- such as Windows Server 2016 in one VM, Windows Server 2019 in another VM, and Ubuntu Linux in yet another VM -- all on the same computer. Full virtualization also simplifies VM backups and migrations. Live migration, for example, moves a running VM from one computer to another without disrupting the VM or its workload. This kind of flexibility enables organizations to reduce hardware costs and simplify system hardware maintenance.

Disadvantages of full virtualization

Despite virtualization's broad adoption and continued success, the technology has some drawbacks. Hypervisors running on hardware-assisted processors approach the performance of bare-metal (nonvirtualized) OS and application deployments. However, the hypervisor itself still adds a layer of complexity to the technology stack, and an organization must procure, license, implement and manage it.

Applications that require direct access to the underlying computer's hardware won't function properly in a VM. Today, such applications are rare, and most of them are legacy software. Even in extreme cases where a legacy application can't be updated, it can keep running on a dedicated server. That shouldn't hold back the adoption of full virtualization for other enterprise workloads.

IT professionals must consider availability and risk in VM deployments. In bare-metal environments, a server fault will affect one workload. In a virtualized environment, a physical server fault or failure can affect every VM running on the system. For example, if a server running five VMs should fail, all five of those workloads will fail as well and must be recovered. Multiple VMs on the same system can also potentially congest the system's available LAN bandwidth. This makes load balancing, protection and recovery schemes very important in virtualized data centers.
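
The availability risk can be quantified with a simple placement check. The following Python sketch uses hypothetical host and VM names. It counts the largest number of VMs that a single host failure could take down, and it compares consolidating five VMs on one host against spreading them across three hosts. Real placement policies, such as anti-affinity rules and capacity limits, are more involved. The naive round-robin spread is an assumption chosen for brevity.

```python
from collections import Counter


def worst_case_loss(placement: dict) -> int:
    """Most VMs a single host failure could take down.

    placement maps each VM name to the host it runs on.
    """
    per_host = Counter(placement.values())
    return max(per_host.values(), default=0)


def spread(vms: list, hosts: list) -> dict:
    """Naive round-robin placement across hosts."""
    return {vm: hosts[i % len(hosts)] for i, vm in enumerate(vms)}


vms = [f"vm{i}" for i in range(5)]
consolidated = {vm: "host-a" for vm in vms}
spread_out = spread(vms, ["host-a", "host-b", "host-c"])

print(worst_case_loss(consolidated))  # 5
print(worst_case_loss(spread_out))    # 2
```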

What is paravirtualization?

Para means alongside or partial, and paravirtualization gained attention as one potential answer to full virtualization's early performance issues. Paravirtualization sought to improve early virtualization performance by making the OS aware of the hypervisor. Products such as IBM LPAR enabled the OS to communicate directly with the hypervisor and hand off operations that would otherwise be complex and time-consuming for the hypervisor's VMM to intercept and emulate. Commands sent from the OS to the hypervisor are called hypercalls. The guest OS still runs its own kernel and manages its own resources, but it cooperates with the hypervisor rather than assuming it owns the hardware. That's the para, or partial, angle.

For paravirtualization to work, admins must modify or adapt the guest OSes to implement an API capable of exchanging hypercalls with the paravirtualization hypervisor. Typically, a paravirtualization hypervisor, such as Xen, relies on support and drivers built into the guest OS kernel, as in Linux and some other OSes.
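
The performance argument for hypercalls can be illustrated with a toy count of guest-to-hypervisor transitions. In this Python sketch, an unmodified guest on an early hypervisor without hardware assistance pays one trap per privileged page-table update. A paravirtualized guest instead queues its updates and submits them in batches, with one hypercall per batch. The batch size of 64 is an arbitrary assumption, and the model ignores the differing cost of each transition. Xen's interface does allow batched memory-management updates, but these functions are hypothetical and are not Xen's API.

```python
import math


def transitions_trapped(updates: int) -> int:
    """Unmodified guest: each privileged page-table write is intercepted,
    costing one guest-to-hypervisor transition."""
    return updates


def transitions_paravirt(updates: int, batch_size: int = 64) -> int:
    """Paravirtualized guest: the modified kernel queues updates and passes
    them to the hypervisor in batches, one hypercall per batch."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return math.ceil(updates / batch_size)


for n in (1, 100, 10_000):
    print(f"{n} updates: {transitions_trapped(n)} traps "
          f"vs. {transitions_paravirt(n)} hypercalls")
```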

Unmodified proprietary OSes, such as Microsoft Windows, can't run as fully paravirtualized guests. They can still run on a Xen hypervisor using hardware-assisted virtualization, and paravirtualization-aware device drivers might be available to improve their performance there. Where paravirtualization is used, admins must modify the OS to communicate with the hypervisor, but the applications themselves don't require any modifications.

Benefits of paravirtualization

Paravirtualization relies on direct communication between the guest OS kernel and the underlying hypervisor. In the early days of virtualization, it could offer better performance and system utilization than full virtualization hypervisors running on processors without hardware assistance. Paravirtualization also promised easier backups, faster migrations, improved server consolidation and reduced power consumption compared to hypervisors on legacy hardware.

Today, the benefits of paravirtualization are largely mitigated by its disadvantages when compared to full virtualization running on modern hardware with hardware-assisted processors.

Disadvantages of paravirtualization

Despite its early benefits, paravirtualization also draws some important criticisms. Because the guest OS must be modified, paravirtualization is limited to OS versions, mostly open source, that have been properly modified and validated for such use. That narrows the OS options available to an enterprise. Major proprietary OSes, such as Windows Server, can't run as fully paravirtualized guests.

Paravirtualization also requires a hypervisor and a modified OS that can communicate with each other through APIs. This direct communication creates a tight dependency between the OS and the hypervisor. A hypervisor or OS update can therefore cause version compatibility problems that break the virtualization. The intentional communication channel could also introduce security vulnerabilities. There is simply more to go wrong.
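
The version dependency can be sketched as an interface negotiation at boot. In this hypothetical Python example, the guest kernel and the hypervisor each advertise the hypercall interface versions they support, and the guest uses the newest version they share. The integer version numbers and the function names are assumptions for illustration. The point is that if an update on either side drops the shared version, the guest can no longer boot.

```python
def negotiate_interface(guest_versions, hypervisor_versions):
    """Return the newest hypercall interface version both sides support,
    or None if they share none."""
    common = set(guest_versions) & set(hypervisor_versions)
    return max(common) if common else None


def boot_guest(guest_versions, hypervisor_versions) -> int:
    """Boot succeeds only if guest and hypervisor agree on an interface."""
    version = negotiate_interface(guest_versions, hypervisor_versions)
    if version is None:
        raise RuntimeError("no common hypercall interface: guest cannot boot")
    return version


print(boot_guest({3, 4}, {4, 5}))  # guest and hypervisor share version 4
try:
    boot_guest({3, 4}, {5, 6})     # hypervisor update dropped version 4
except RuntimeError as err:
    print(err)
```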

Another disadvantage of paravirtualization is that its performance gains are hard to predict, because many of its benefits vary with the workload. The gain depends largely on how many paravirtualization APIs a workload uses and how much of its compute time those calls account for.
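
One way to see why the gains are hard to predict is an estimate based on Amdahl's law. This model is an assumption of the sketch, not one published for any paravirtualization product. If only a fraction of a workload's runtime is spent in operations that the paravirtual interface accelerates, then only that fraction speeds up, so the overall gain depends heavily on the workload mix.

```python
def paravirt_speedup(overhead_fraction: float, acceleration: float) -> float:
    """Amdahl's-law estimate of whole-workload speedup.

    overhead_fraction: share of runtime (0.0 to 1.0) spent in operations
        the paravirtual interface accelerates.
    acceleration: how many times faster those operations become.
    """
    if not 0.0 <= overhead_fraction <= 1.0:
        raise ValueError("overhead_fraction must be between 0 and 1")
    if acceleration <= 0:
        raise ValueError("acceleration must be positive")
    return 1.0 / ((1.0 - overhead_fraction) + overhead_fraction / acceleration)


# Two hypothetical workloads; the affected operations get 5x faster in both.
print(round(paravirt_speedup(0.02, 5), 3))
print(round(paravirt_speedup(0.40, 5), 3))
```

With the same fivefold acceleration, a hypothetical workload that spends 2% of its time in such operations gains less than 2% overall. One that spends 40% of its time there gains about 47%.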

Key differences between full virtualization and paravirtualization

Paravirtualization attempts to accomplish the same goals as Type 1 (full) virtualization. But paravirtualization modifies the OS as a workaround, which is often undesirable because it makes virtualization dependent on the OS. Any OS patches or updates -- or even a need to switch to a different OS -- can cripple virtualization on that system. Type 1 virtualization provides total isolation and leaves virtualization completely independent of any other OSes running on the system. In fact, a host OS is unnecessary when running Type 1 virtualization. The Type 1 hypervisor itself effectively acts as the host OS.

Where paravirtualization implements a virtualization layer beneath a modified guest OS, Type 2 (hosted) virtualization simply adds a hypervisor as an ordinary application installed on top of a standard, unmodified host OS. Once a Type 2 hypervisor is installed, admins can create VMs as needed. Container virtualization follows a similar hosted model, although a container engine isn't a hypervisor. Rather than creating VMs, it isolates workloads using features of the shared host OS kernel. Type 2 hypervisors and container engines can share a host OS, but again, the host OS requires no modifications and doesn't create an undesirable dependency for VMs.

Understanding hardware-assisted virtualization

Full virtualization works by using a software hypervisor to abstract a computer's hardware resources -- memory, processors, network I/O -- and provide logical representations of those resources to logical VM instances. This imposes another layer of software used to manage resources and handle the translation between logical and physical resources. In the early days of virtualization, this continuous translation between physical and logical resources imposed a serious performance penalty that limited the number of VMs that a hypervisor could practically create and support on a computer.
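
The translation the hypervisor adds can be pictured as a second mapping layered under the guest OS's own page tables. This Python sketch maps a guest-virtual address to a guest-physical address through the guest OS's table, and then to a host-physical address through the hypervisor's table. For simplicity it uses single-level dictionaries and made-up page numbers, whereas real page tables are multi-level structures. Hardware features such as Intel Extended Page Tables (EPT) and AMD Nested Page Tables (NPT) perform this second stage in the processor instead of in hypervisor software.

```python
PAGE_SIZE = 4096  # assume 4 KiB pages


def translate(address: int, page_table: dict) -> int:
    """Map an address through a single-level table keyed by page number."""
    page, offset = divmod(address, PAGE_SIZE)
    if page not in page_table:
        raise LookupError(f"no mapping for address {address:#x}")
    return page_table[page] * PAGE_SIZE + offset


# Stage 1, owned by the guest OS: guest-virtual page -> guest-physical page.
guest_page_table = {0x10: 0x2}
# Stage 2, owned by the hypervisor: guest-physical page -> host-physical page.
hypervisor_map = {0x2: 0x7A}

guest_virtual = 0x10 * PAGE_SIZE + 0x123
guest_physical = translate(guest_virtual, guest_page_table)
host_physical = translate(guest_physical, hypervisor_map)
print(hex(guest_virtual), hex(guest_physical), hex(host_physical))
```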

Computer hardware designers quickly realized that many of the time-consuming processes needed to handle full virtualization could be vastly accelerated through the addition of specific microprocessor instructions, rather than using software to emulate those functions outside of the processor. The addition of these new instruction sets was dubbed hardware-assisted virtualization.

Starting in 2005, major microprocessor vendors Intel and AMD added sets of new instructions to their processor families. These instructions were designed specifically to accelerate the tasks associated with virtualization. Intel called its extensions Intel VT (VT-x on its x86 processors), while AMD named its extensions AMD-V. Today, virtually all modern server and desktop processors support hardware virtualization extensions, although some dedicated or task-specific microcontrollers might not.
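
On a Linux x86 host, a quick way to check for these extensions is to look for the `vmx` flag (Intel VT-x) or the `svm` flag (AMD-V) in `/proc/cpuinfo`. The Python sketch below parses that text. The function name is hypothetical, and the check applies only to Linux on x86. A missing flag doesn't always mean the processor lacks the feature, because firmware settings or running inside a VM may hide it.

```python
from pathlib import Path


def virtualization_extensions(cpuinfo_text: str) -> set:
    """Return the hardware virtualization extensions named in the
    'flags' lines of Linux /proc/cpuinfo text (x86 only)."""
    found = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags = set(value.split())  # exact matches only, so 'vme' != 'vmx'
            if "vmx" in flags:
                found.add("Intel VT-x (vmx)")
            if "svm" in flags:
                found.add("AMD-V (svm)")
    return found


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        print(virtualization_extensions(cpuinfo.read_text())
              or "no vmx or svm flag reported")
```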

Full virtualization vs. paravirtualization: The verdict

Today, most general-purpose processors intended for enterprise-class servers include hardware-assisted virtualization extensions, such as Intel VT or AMD-V. These extensions have largely eliminated the performance penalties associated with full virtualization, and Type 1 full virtualization has become the preeminent approach to enterprise virtualization. Even Type 2 virtualization and containerization, both of which run on a shared host OS, can approach native hardware performance on modern processors.

With hardware assistance in place, full virtualization also delivers strong isolation and can run any OS without modification. As a result, paravirtualization hasn't gained much traction in enterprise data centers. Full virtualization has become the de facto standard for much of the industry, while paravirtualization is generally relegated to experimental and niche use cases.

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 20 years of technical writing experience in the PC and technology industry.