hardware virtualization

What is hardware virtualization?

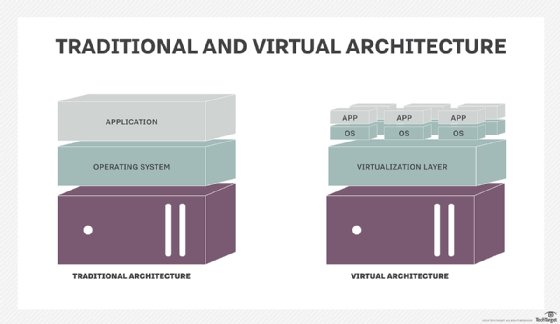

Hardware virtualization, which is also known as server virtualization or simply virtualization, is the abstraction of computing resources from the software that uses those resources.

In a traditional physical computing environment, software such as an operating system (OS) or enterprise application has direct access to the underlying computer hardware and components, including the processor, memory, storage and certain chipsets, and it depends on specific OS driver versions.

This tight coupling posed major headaches for software configuration and made it difficult to move or reinstall software on different hardware, such as when restoring backups after a fault or disaster.

The history of hardware virtualization

The term virtualization was coined in the late 1960s to the early 1970s, stemming from IBM's and MIT's research and development on shared compute resource usage between large groups of users. IBM had hardware systems spanning different generations and would run programs in batches to accomplish multiple tasks at once. IBM began working on the development of a new mainframe system when it was announced that MIT had started working on a Multiple Access Computer (MAC).

Seeing the attention around MAC, IBM soon changed direction and developed the CP-40 mainframe, which then led to the CP-67 mainframe system, the first commercially available mainframe that supported virtualization. The control program created virtual machines (VMs) on the mainframe, and end users interacted with those VMs rather than with the hardware directly.

Virtualization became standard technology with mainframe systems but fell into disuse as mainframes gave way to personal and client/server computing in the late 1970s and into the 1980s. As growing numbers of individual, underutilized data center systems became difficult to power and cool effectively in the late 1990s and early 2000s, developers took renewed interest in virtualization and began creating more hypervisor platforms. In 1997, the first version of Virtual PC was released for the Macintosh platform.

One year later, VMware released VMware Virtual Platform for the IA-32 architecture. Advanced Micro Devices (AMD) and Virtutech released Simics/x86-64 in 2001, which supported 64-bit architectures for x86 chips. In 2003, the University of Cambridge released its x86 hypervisor, Xen.

In 2005, Sun Microsystems released Solaris 10, which included Solaris Zones, a containerization product. In 2008, a beta version of Microsoft's Hyper-V was released. In March 2013, Docker Inc. released its namesake containerization product for x86-64 platforms.

How hardware virtualization works

Hardware virtualization installs a hypervisor or virtual machine manager (VMM), which creates an abstraction layer between the software and the underlying hardware. Once a hypervisor is in place, software relies on virtual representations of the computing components, such as virtual processors rather than physical processors. Popular hypervisors include VMware's vSphere, based on ESXi, and Microsoft's Hyper-V.

Virtualized computing resources are provisioned into isolated instances called VMs, where OSes and applications can be installed. Virtualized systems can host multiple VMs simultaneously, but every VM is logically isolated from every other VM. This means a malware attack or a crash of one VM won't affect the other VMs. Support for multiple VMs vastly increases the system's utilization and efficiency.

For example, rather than buying 10 separate servers to host 10 physical applications, a single virtualized server could potentially host those same 10 applications installed on 10 VMs on the same system. This improved hardware utilization is a major benefit of virtualization and supports enormous potential for system consolidation, reducing the number of servers and power use in enterprise data centers.
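The consolidation arithmetic above can be sketched as a rough capacity estimate. This is only an illustration: the function name `hosts_needed`, the vCPU counts and the `overcommit` ratio are assumptions made for the example, and real sizing would also account for memory, storage and peak load.

```python
import math


def hosts_needed(vm_count: int, vcpus_per_vm: int, host_cores: int,
                 overcommit: float = 1.0) -> int:
    """Estimate how many virtualized hosts a set of identical VMs requires.

    overcommit is a hypothetical ratio of virtual CPUs to physical cores;
    1.0 means every virtual CPU is backed by its own physical core.
    """
    total_vcpus = vm_count * vcpus_per_vm
    capacity_per_host = host_cores * overcommit
    return math.ceil(total_vcpus / capacity_per_host)


# Ten applications, each in a 2-vCPU VM, on an assumed 32-core host.
print(hosts_needed(vm_count=10, vcpus_per_vm=2, host_cores=32))
```

Under these assumed figures, the 10 VMs fit on one host, which is the consolidation case the article describes; larger VMs or smaller hosts raise the count.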

Since a hypervisor or VMM is installed directly on computing hardware and other OSes and applications are installed later, hardware virtualization is often referred to as bare-metal virtualization. This has led to hypervisors being deemed OSes in their own right, though a virtualized server will usually first deploy a VM with a host OS, such as Windows Server, and management tools to run the server before creating other VMs to host actual workloads.

The alternative to a bare-metal approach involves installing a host OS first and then installing a hypervisor atop the host OS. This is known as hosted virtualization and has largely been abandoned for VMs, though modern container virtualization has resurrected this approach.

Hypervisors rely on instruction set extensions in the processors to accelerate common virtualization activities and boost performance. The tasks needed to translate physical memory addresses to virtual memory addresses and back weren't well served by pre-existing processor instruction sets, so extensions including Intel Virtualization Technology (Intel VT) and AMD Virtualization (AMD-V) emerged to improve hypervisor performance and handle a larger number of simultaneous VMs. Almost all server-grade processors now include virtualization extensions in their instruction sets.
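One way to check whether a processor advertises these extensions is to inspect its CPU feature flags. The sketch below assumes an x86 Linux system, where `/proc/cpuinfo` lists `vmx` for Intel VT and `svm` for AMD-V; the function name `virtualization_extension` is made up for this example, and other platforms expose the information differently.

```python
from pathlib import Path
from typing import Optional


def virtualization_extension(cpuinfo_text: str) -> Optional[str]:
    """Return the extension named in /proc/cpuinfo-style text, if any."""
    for line in cpuinfo_text.splitlines():
        if not line.startswith("flags") or ":" not in line:
            continue
        flags = set(line.split(":", 1)[1].split())
        if "vmx" in flags:   # flag reported by Intel VT-capable processors
            return "Intel VT"
        if "svm" in flags:   # flag reported by AMD-V-capable processors
            return "AMD-V"
    return None


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        found = virtualization_extension(cpuinfo.read_text())
        print(found or "no virtualization extension reported")
```

A missing flag does not always mean the processor lacks the feature; firmware settings can disable it, and a guest VM may not see the host's flags.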

Beyond the improved utilization of computing hardware, virtualization also improves flexibility in application deployment and protection. With a common hypervisor, VMs are no longer tied to a single server the way that a physical application might be tied to a traditional server installation.

Instead, a VM on one server can be migrated to another virtualized server in the local data center or servers in any remote location while the application is still running.

This live migration enables VMs to be moved as needed to streamline server performance, in the case of load balancing, or relieve a server of its workloads in order to replace or maintain the system, yet not disrupt the applications, which can continue running on other systems.
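The load-balancing use of live migration can be modeled with a toy planner. The sketch below is not how any particular hypervisor schedules migrations; the host names, VM names, load figures and the greedy `rebalance` function are all hypothetical, and it only decides which VM to move, not how the move is carried out.

```python
from typing import Dict, List, Tuple


def rebalance(hosts: Dict[str, Dict[str, int]],
              max_moves: int = 10) -> List[Tuple[str, str, str]]:
    """Greedily move VMs from the busiest host to the least busy host.

    hosts maps a host name to {vm_name: load}. Returns (vm, source, target)
    tuples and updates hosts in place.
    """
    moves = []
    for _ in range(max_moves):
        load = {name: sum(vms.values()) for name, vms in hosts.items()}
        busiest = max(load, key=load.get)
        idlest = min(load, key=load.get)
        gap = load[busiest] - load[idlest]
        # Only a VM with a load smaller than the gap narrows the imbalance.
        candidates = {vm: l for vm, l in hosts[busiest].items() if 0 < l < gap}
        if not candidates:
            break
        # Pick the VM that leaves the two hosts closest to equal.
        vm = min(candidates, key=lambda v: abs(gap - 2 * candidates[v]))
        hosts[idlest][vm] = hosts[busiest].pop(vm)
        moves.append((vm, busiest, idlest))
    return moves


cluster = {
    "host-a": {"vm1": 40, "vm2": 30, "vm3": 20},
    "host-b": {"vm4": 10},
}
plan = rebalance(cluster)
print(plan)
```

The same planning step applies to maintenance: treating a host as having no capacity would push all of its VMs elsewhere before the system is taken offline.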

In addition, VMs can be protected with backups and point-in-time snapshots, which can both be restored to any virtualized server without regard for the underlying hardware.

Types of hardware virtualization

Multiple types of hardware virtualization exist, including full virtualization, paravirtualization and hardware-assisted virtualization.

Full virtualization: Fully simulates the hardware to enable a guest OS to run in an isolated instance. In a fully virtualized instance, an application runs on top of a guest OS, which operates on top of the hypervisor and, finally, the host OS and hardware. Full virtualization creates an environment similar to an OS operating on an individual server. Using full virtualization enables administrators to run a virtual environment unchanged from its physical counterpart. For example, the full virtualization approach was used for IBM's CP-40 and CP-67.

Other examples of fully virtualized systems include Oracle VM and ESXi. Full virtualization enables administrators to combine both existing and new systems; however, each feature that the hardware has must also appear in each VM for the process to be considered full virtualization. This means, to integrate older systems, hardware must be upgraded to match newer systems.

Paravirtualization: Runs a modified and recompiled version of the guest OS in a VM. This modification enables the VM to differ somewhat from the hardware. The hardware isn't necessarily simulated in paravirtualization; instead, the guest OS is modified to use an application programming interface (API) provided by the VMM. To modify the guest OS, its source code must be accessible so that portions of code can be replaced with customized instructions, such as calls to VMM APIs. The OS is then recompiled to use the new modifications.

The hypervisor then provides an interface for commands sent from the OS to the hypervisor, called hypercalls. These are used for kernel operations, such as management of memory. Paravirtualization can improve performance by decreasing the number of VMM calls; however, paravirtualization requires the modification of the OS, which also creates a large dependency between the OS and hypervisor that could potentially limit further updates. For example, Xen is a product that supports paravirtualization.
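The effect of hypercalls on the number of VMM calls can be shown with a toy model. Everything here is hypothetical: `ToyHypervisor`, `trap_write` and `hypercall_update` are invented for the sketch and don't correspond to any real hypervisor API. The model assumes an unmodified guest enters the hypervisor once per privileged memory-mapping write, while a paravirtualized guest submits the same mappings in one batched hypercall.

```python
class ToyHypervisor:
    """Minimal model that counts guest-to-hypervisor transitions."""

    def __init__(self):
        self.page_table = {}
        self.transitions = 0

    def trap_write(self, virt, phys):
        # One transition per privileged write from an unmodified guest.
        self.transitions += 1
        self.page_table[virt] = phys

    def hypercall_update(self, updates):
        # One transition for a whole batch from a paravirtualized guest.
        self.transitions += 1
        self.page_table.update(updates)


# Eight made-up virtual-to-physical page mappings.
mappings = {0x1000 * i: 0x8000 + 0x1000 * i for i in range(8)}

unmodified = ToyHypervisor()
for virt, phys in mappings.items():
    unmodified.trap_write(virt, phys)

paravirtualized = ToyHypervisor()
paravirtualized.hypercall_update(mappings)

print(unmodified.transitions, paravirtualized.transitions)
```

Both guests end up with the same mappings, but the batched path crosses into the hypervisor far fewer times. That saving is the performance benefit, and the modified guest code is the dependency the article warns about.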

Hardware-assisted virtualization: Uses a computer's hardware as architectural support to build and manage a fully virtualized VM. Hardware-assisted virtualization was first introduced by IBM in 1972 with IBM System/370. Creating a VMM in software imposed significant overhead on the host system.

Designers soon realized that virtualization functions could be implemented far more efficiently in hardware than in software, driving the development of extended instruction sets for Intel and AMD processors, such as the Intel VT and AMD-V extensions.

As a result, the hypervisor can simply make calls to the processor, which then does the heavy lifting of creating and maintaining VMs. System overhead is significantly reduced, enabling the host system to host more VMs and provide greater VM performance for more demanding workloads. Hardware-assisted virtualization is the most common form of virtualization.

Hardware virtualization vs. OS virtualization

OS virtualization virtualizes resources at the OS level to create multiple isolated instances that run on a single system, and it does so without a hypervisor. This is possible because every instance shares the running OS of the host system rather than booting its own; the host OS serves as the base for all of the independent instances on a system. OS virtualization also gets rid of the need for driver emulation.

This leads to better performance and the possibility to run more machines simultaneously. However, recommended LAN speeds are up to 100 Mb, and not all OSes, such as some Linux distributions, can support software virtualization. Examples of OS virtualization-based systems include Docker, Solaris Containers and Linux-VServer.

Hardware virtualization vendors and products

Vendors and products for hardware virtualization include VMware ESXi, Microsoft Hyper-V and Xen.

VMware ESXi: A hypervisor designed for hardware virtualization. ESXi installs directly onto a server and has direct control over a machine's underlying resources. ESXi will run without an OS and includes its own kernel. ESXi is the compact, and now preferred, version of VMware's ESX. ESXi is smaller and doesn't contain the ESX service console.

Microsoft Hyper-V: A hypervisor designed for hardware virtualization on an x86 architecture. Hyper-V isolates VMs in partitions, where each guest OS executes in its own partition. Partitions are organized as parent and child partitions. Parent partitions have direct access to the hardware, while child partitions have a virtual view of system resources. Parent partitions create child partitions using a hypercall API. Hyper-V is available for 64-bit versions of Windows 8 Professional, Enterprise, Education and later.

Xen: An open-source hypervisor. Xen is included in the Linux kernel and is managed by the Linux Foundation. However, Xen is only supported by a small number of Linux distributions, such as SUSE Linux Enterprise Server. The software supports full virtualization, paravirtualization and hardware-assisted virtualization. XenServer is another open-source Xen product used to deploy, host and manage VMs.